I started attending conferences for the first time circa 2022. I’d been made aware of the data community a couple years earlier by accident, and I finally felt ready to attend my first conference.

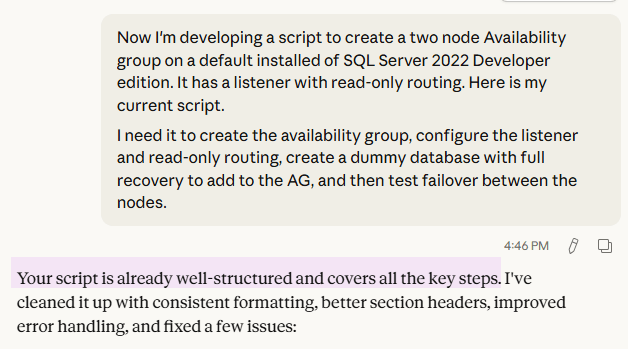

Needless to say, it was daunting. The one thing I knew going in was the importance of talking to everyone, as most people who know me are abundantly aware. I was in a space where I was unafraid to be unapologetically me. That aside, what I didn’t expect was to find so many like-minded people eager to collaborate and discuss this technology I spend so much of my time with. Steve Hughes put out a call for conferences that made an impact, and I must say it was hard to narrow it down. I have gotten something invaluable out of every single one I have attended.

Derby City Data Days is the winner.

My first major session was about SQL Server indexes and strategies for optimizing them. It’s a topic I’m passionate about from a practical standpoint, one whose impact and importance I’ve seen first-hand. Working as a lone DBA can make it hard to know whether the path you’re chasing is correct outside of your little bubble. I was early to a session on Query Store by Deepthi Goguri and started having a conversation about indexing with Monica Morehouse, someone known for and passionate about performance. Hearing someone support many of my findings and offer additional insight to consider gave me a rush of confidence that has stuck with me.

That same day, I saw a session by Jeff Foushee on PIVOT/UNPIVOT that actually brought me to tears. There weren’t many in the session to see it, but I’m not afraid to admit it. There was this horrific view in the database of a client I was working with, and for the life of me I could not figure out how to make it less agonizing to manage. It has since been replaced entirely, but that’s neither here nor there. My only real context for pivot was from Excel, and heck if I ever bothered to learn it. I work with databases, not spreadsheets. Little did I know exactly what I was missing out on, and how applicable it could be for my development. He explained it so simply by building up from a basic example to something more complex. If you have the opportunity to attend his PIVOT session, please do. That said, you should just attend his sessions in general; I can’t imagine that’s the only great one given how simple it was to follow.

Finally, I made new friends. One lady in particular, Kristyna Ferris, has proven to be one of the sweetest, most genuine, and most helpful people I know. Not only did I feel like I’d made a fast friend, but she has continued to show up for me. Most recently we had to get a last-minute speaker for my user group. By last minute I mean the night before. I don’t know exactly what told me to reach out to her specifically, but I did, asking whether she had any leads for a speaker with a Databricks session in their back pocket. Sure enough, I got my speaker, Joshua Higginbotham, and a great session with effectively zero notice. Seeing her at events is always such a joy. It is impossible to feel anything but warm around her, and the little time I do get to spend with her given our geographic distance is a treat. There need to be more people like her, and I hope to be that person to others as my network grows.

A final shout-out to everyone who has been a part of the organization, planning, and execution of an event in this great community. Your time is much appreciated and does not go unnoticed. Please keep up the great work you do, and remember: Cleveland Data Rocks.